The more data automation gathers, the more teams freeze when faced with one question: What action comes next? Reporting covers everything, yet many companies lose the ability to act decisively. Automation without architecture does not bring progress; it multiplies uncertainty.

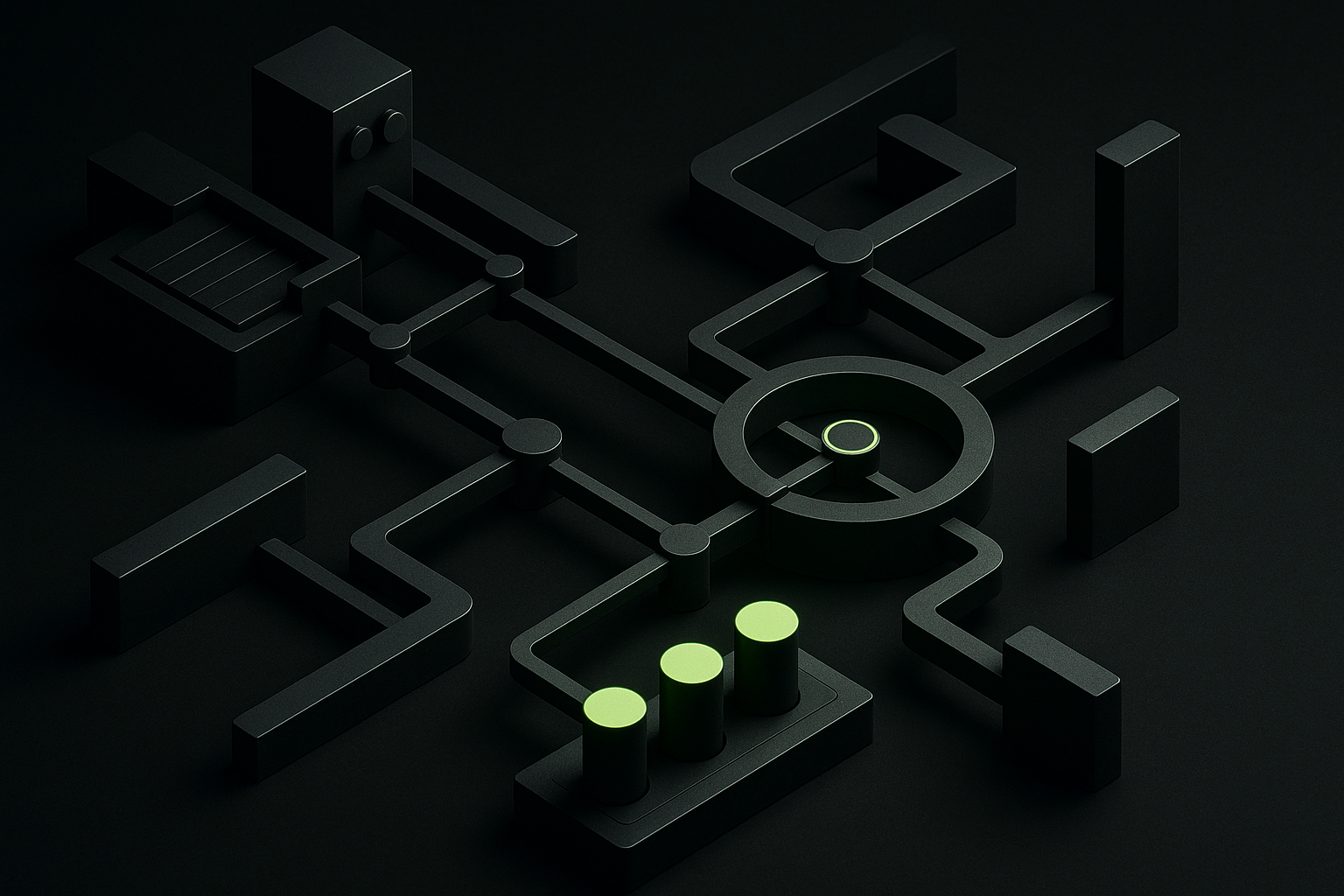

Complex systems don’t solve simple problems

Organizations load up on ML stacks but often lose track of core process metrics along the way. Solution complexity obscures the company’s real bottlenecks and slows the business down exactly where rapid reaction is the real value driver. The result: key decisions arrive more slowly precisely where speed delivers a measurable edge.

Complexity masks operational gaps—until the cost of failure becomes explicit.

Without precise process mapping and control of data flows, tech upgrades become costly liabilities. Especially in regulated fields, upgrades turn into expensive cycles in which technology dictates the pace but no longer drives the business.

Opaque data, real-world impact

Automation breeds black-box architectures: flows whose logic operating teams can no longer decipher. The more isolated and proprietary data transformation becomes, the less knowledge remains inside the organization. Fault lines hide in invisible interfaces, and user trust collapses beneath the surface.

- Shadow processes multiply across uncoordinated data silos.

- Analysis mistakes stay hidden without full traceability.

- Migration becomes high risk when context knowledge is missing.

Example: At an aerospace group, unclear data flows paused entire production lines — every correction added days to project delivery.
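One way to keep transformations traceable is to carry a lineage record alongside each value. The following is a minimal sketch, not a prescribed implementation: `TracedValue`, `apply_step`, and the two pipeline steps are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TracedValue:
    """A value plus the ordered list of steps that produced it."""
    value: float
    lineage: List[str] = field(default_factory=list)


def apply_step(item: TracedValue, name: str,
               fn: Callable[[float], float]) -> TracedValue:
    """Apply one transformation and record its name, input, and output."""
    result = fn(item.value)
    entry = f"{name}: {item.value} -> {result}"
    # Return a new object so earlier stages keep their own lineage intact.
    return TracedValue(result, item.lineage + [entry])


# Hypothetical pipeline: a unit conversion followed by a fixed offset.
raw = TracedValue(1250.0)
converted = apply_step(raw, "mm_to_cm", lambda v: v / 10)
final = apply_step(converted, "add_tolerance", lambda v: v + 0.5)

for line in final.lineage:
    print(line)
```

With a record like this, a wrong result can be walked back step by step instead of being reverse-engineered from the final report.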

Simple isn’t enough: The cost of UX reduction

An interface that smooths everything over reveals none of the breaks in the real process flow.

The obsession with 'intuitive' interfaces creates a myth of control: decision-makers assume everything is handled, while vital details vanish into oversimplification. Operational risks go unaddressed, simply because they’re hidden. The danger peaks when workflows get mapped into UIs that can’t show real-world variability.

Optimal interface design demands deep process awareness at the UX level. Show the wrong metrics and confusion spreads; hide too much and conversion sinks. Reducing ‘friction’ is not an architectural win; it is an operational tranquilizer.
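A small sketch of how a simplified metric can hide variability. The cycle times and both view functions are invented for illustration and assume a dashboard that would otherwise show a single average.

```python
import statistics

# Hypothetical cycle times in hours for one workflow step.
# Nine runs are routine; one run stalls badly.
cycle_times = [2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 2.0, 22.0]


def simplified_view(samples):
    """What an oversimplified UI shows: one reassuring number."""
    return {"mean": statistics.mean(samples)}


def variability_view(samples):
    """Same data, but with the spread left visible."""
    return {
        "median": statistics.median(samples),
        "min": min(samples),
        "max": max(samples),
    }


print(simplified_view(cycle_times))
print(variability_view(cycle_times))
```

The mean alone suggests a moderately slow step; the second view shows that the typical run is fast and one outlier is the actual operational risk.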

Monoliths build bottlenecks, not speed

Centralizing business functions in a monolith is often pitched as efficiency. In practice, it causes coordination gridlock: every small subsystem change requires a stack-wide rewrite, and integrating external partners gets painful. Modularity becomes wishful thinking.

- Accept that no end-to-end platform covers every edge case.

- Design for loose coupling, not rigid control.

- Structure ownership by module—not by linear process.

Real speed comes only when architecture allows domain-level control. Only with intentional decoupling and explicit integration points can processes adapt to change.
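As a rough illustration of an explicit integration point, the sketch below has an ordering module depend on a small interface rather than on a concrete pricing module. All class names, the `quote` method, and the prices are assumptions made up for this example.

```python
from typing import Dict, Protocol


class PricingPort(Protocol):
    """Explicit integration point: all that ordering knows about pricing."""
    def quote(self, sku: str, quantity: int) -> float: ...


class InHousePricing:
    """Module owned by a hypothetical pricing team."""
    def __init__(self, unit_prices: Dict[str, float]) -> None:
        self._unit_prices = unit_prices

    def quote(self, sku: str, quantity: int) -> float:
        return self._unit_prices[sku] * quantity


class PartnerPricing:
    """Stand-in for an external partner with a flat hypothetical rate."""
    def quote(self, sku: str, quantity: int) -> float:
        return 10.0 * quantity


class OrderService:
    """Ordering depends on the port, not on a concrete pricing module."""
    def __init__(self, pricing: PricingPort) -> None:
        self._pricing = pricing

    def total(self, sku: str, quantity: int) -> float:
        return self._pricing.quote(sku, quantity)


orders = OrderService(InHousePricing({"bolt": 0.5}))
partner_orders = OrderService(PartnerPricing())
print(orders.total("bolt", 4))
print(partner_orders.total("bolt", 4))
```

Swapping the pricing module or integrating a partner changes only what is passed to `OrderService`; the ordering code itself stays untouched.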

Wrong technologies amplify error

The risk in tech selection is not missing features but poor fit: tools deployed because they are available, not because they match the context, build tech debt. Legacy pieces block adaptation where a custom response is required. Re-platforming often reveals the underlying problem: the lack of a shared process snapshot.

Only technology with a proven productivity benefit in context truly scales for operations.

Automation that is not aligned with actual strategy creates phantom growth: expensive and complex, but devoid of substance. The architecture underneath determines whether new tech creates value or compounds existing chaos.

The distinction between reporting and decision-making is architectural—ignore it, and you scale opacity, not control.